Current Projects

Unnaturally natural diets

This project maps out the contours of the idea of “natural” diets in the long history of the Western medical tradition, with an emphasis on the last few centuries of industrialized food. Perhaps because the ideas of natural foods and diets have become so ubiquitous, their long and complex history has been overlooked. Yet the way definitions of, and debates about, our natural diet have shifted over time provides a revealing lens onto popular relationships between nature, technology, and modernity.

Higher Ed + AI

AI is here and is not going away. From a teaching standpoint, higher education (at least) must train students to use it ethically and skillfully. How can humanities instructors take advantage of AI to strengthen critical thinking?

From a research standpoint, AI is challenging conventional notions of expertise. What are the implications for the “expert” historian? How can historians employ AI for menial tasks and free up time and energy for more creative thinking? How can AI's broad (if shallow) knowledge be leveraged to open new avenues for research?

Amaranth

In the summer of 2025, PhD student Jonathan Seyfried and I launched Amaranth, UNM’s Digital Humanities and Public Scholarship Studio. We strive to harness innovative digital technologies to facilitate engagement with the humanities. We empower scholars, educators, and communities to create immersive storytelling experiences that invite critical engagement with core humanities issues. Through collaborative projects, open-access resources, and sustainable digital platforms, we bridge academic research with public discourse.

Digital Pedagogy via Xanthan

Discourse in public digital history has often called for more open-source tools that facilitate digital pedagogy, literacy, and sustainable, collaborative work. Yet faculty and students have had few options beyond ad-saturated versions of web hosting software or proprietary tools.

With an emphasis on simplicity, sustainability, and thorough documentation, Xanthan provides an accessible jumping-off point for public digital scholarship. There are ample templates and instructions for anyone to create their own website with free, simple, and mostly code-free text files.

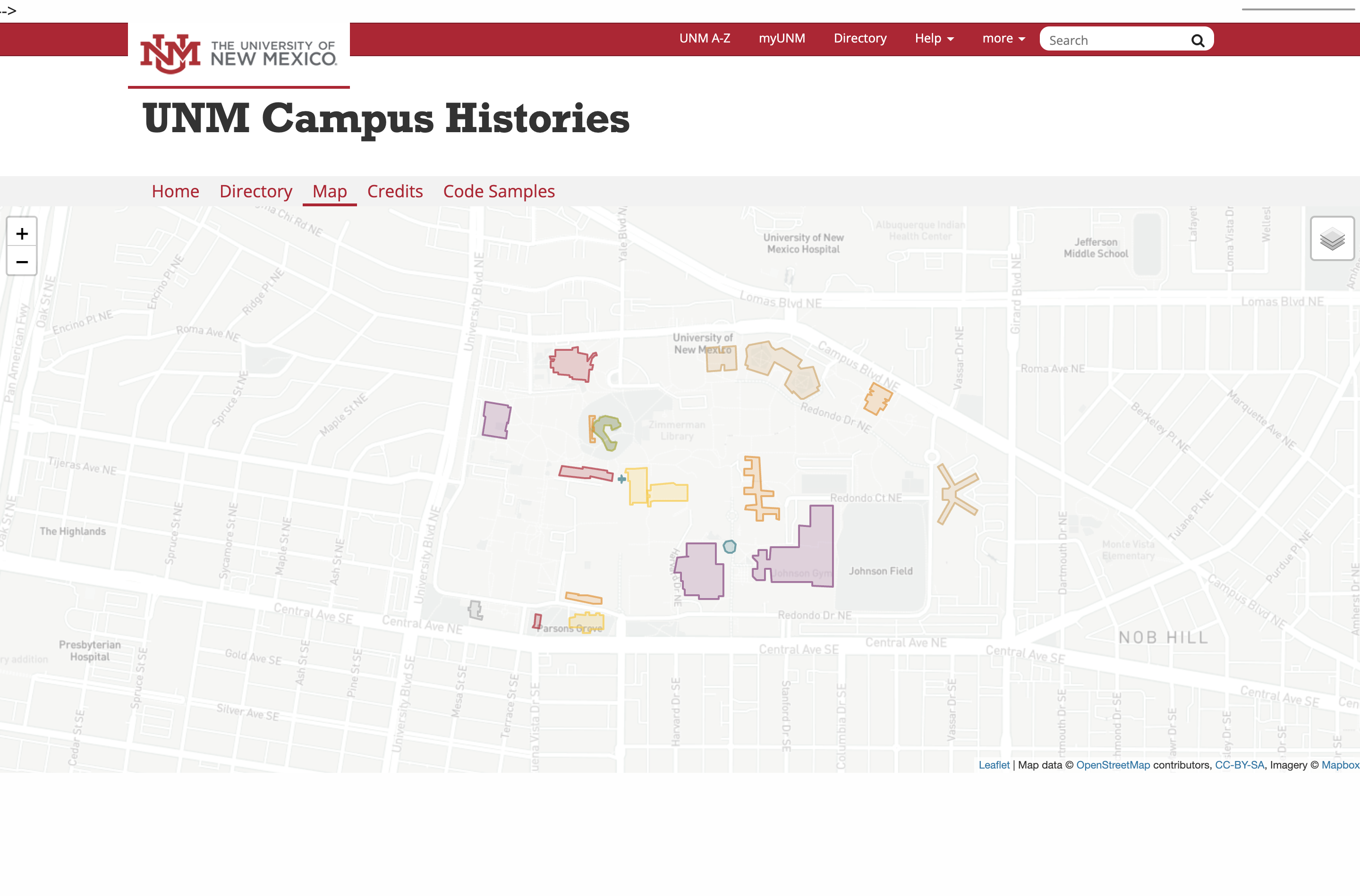

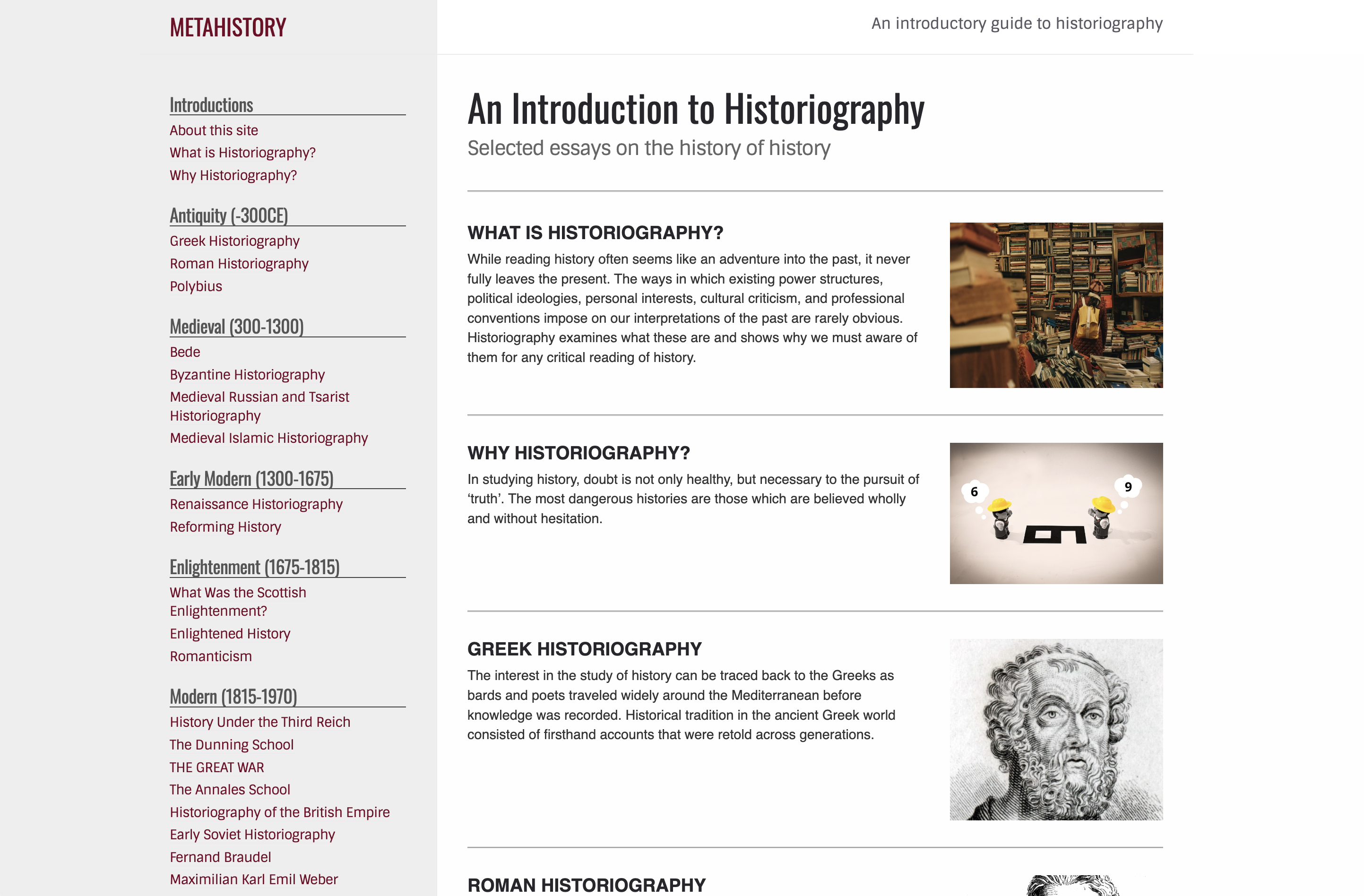

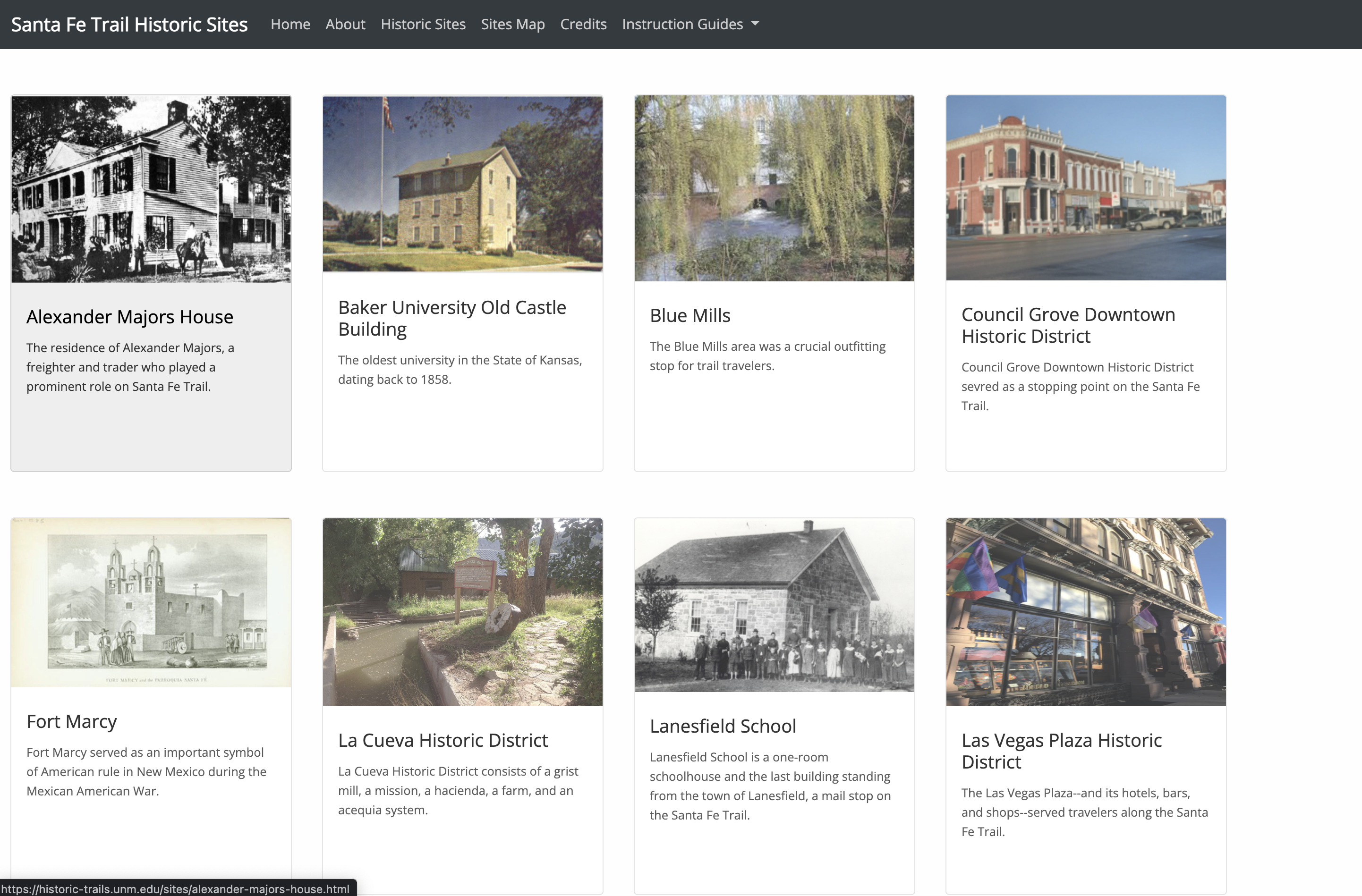

Xanthan-based examples

Below are a few sample projects built with Xanthan. Students constructed every single page in these sites and learned how to collaboratively build a website with plain text files and GitHub Pages, all brought together by Xanthan.

Isn’t there more?

Listed above are my most active projects. For the morbidly curious, you can also read about older project work.